Author: Zhang Jie, backend development engineer, Tencent PCG

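Memory leaks

Memory leaks are an old, much-discussed topic, yet even experienced developers make elementary mistakes. Without a reliable development process that catches problems during testing, leaks easily make it into production. Computing resources are always finite, and a leak does not go away just because resources are plentiful. It is only a matter of time before it causes damage, and how much damage depends on the scenario.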

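The most obvious consequence of a memory leak is a failed memory allocation. In practice, though, the operating system is smarter than that. Taking the overall system load into account, it computes an oom_score for each process, and when memory runs short it picks a suitable process to kill and reclaims its memory; see "How does the OOM killer decide which process to kill first".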

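So a leaking process will most likely end up being killed by the OS. After a process dies, you can check whether the OOM killer was responsible by looking for a corresponding entry in /var/log/messages (or in the kernel log via dmesg). If there is one, the OOM kill is confirmed. Being OOM-killed does not by itself prove that the process leaks memory, however.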
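When there are many log lines to sift through, the check can be scripted. The sketch below assumes the message wording used by recent kernels ("Killed process"), older kernels ("Kill process") and the memory-cgroup variant. The exact format differs between kernel versions, so treat the regular expression as an assumption to adjust. The function name findOOMKills and the sample log lines are made up for illustration.

```go
package main

import (
	"bufio"
	"fmt"
	"regexp"
	"strconv"
	"strings"
)

// oomKillRe matches the OOM killer's kernel message (assumed wording):
// "Out of memory: Killed process <pid> (<comm>) ..." on newer kernels,
// "Out of memory: Kill process <pid> (<comm>) ..." on older ones, and the
// cgroup variant "Memory cgroup out of memory: ...", hence the (?i) flag.
var oomKillRe = regexp.MustCompile(`(?i)out of memory: kill(?:ed)? process (\d+) \(([^)]*)\)`)

// OOMKill is one OOM-killer event found in a log.
type OOMKill struct {
	PID  int
	Comm string
}

// findOOMKills scans kernel log text (for example /var/log/messages or the
// output of dmesg) and returns every OOM kill it mentions.
func findOOMKills(logText string) []OOMKill {
	var kills []OOMKill
	sc := bufio.NewScanner(strings.NewReader(logText))
	for sc.Scan() {
		m := oomKillRe.FindStringSubmatch(sc.Text())
		if m == nil {
			continue
		}
		pid, err := strconv.Atoi(m[1])
		if err != nil {
			continue
		}
		kills = append(kills, OOMKill{PID: pid, Comm: m[2]})
	}
	return kills
}

func main() {
	// Hypothetical log excerpt, for illustration only.
	sample := "kernel: leaks invoked oom-killer: gfp_mask=0x100cca, order=0\n" +
		"kernel: Out of memory: Killed process 86754 (leaks) total-vm:7708208kB\n" +
		"systemd[1]: some unrelated line\n"
	for _, k := range findOOMKills(sample) {
		fmt.Printf("pid=%d comm=%s\n", k.PID, k.Comm)
	}
}
```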

Quality of service

Let's look at how memory leaks affect quality of service as operations practices have evolved:

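Traditional manual operations: an alert is noticed and a human intervenes. This is inefficient and takes ten minutes or more;

Automated deployment on virtual machines: the anomaly is detected and the VM is restarted automatically, which takes on the order of minutes;

Containerized deployment with Docker: the anomaly is detected and the container is restarted automatically, which takes on the order of seconds;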

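Modern operations practices seem to mitigate the problem to some extent, and they do, but it depends on the situation:

If the leaking code path is rarely triggered, it may take a long time, say a week, before an OOM kill happens. If it sits on a critical path with heavy traffic, however, the leak is triggered constantly and the process may die within minutes;

It also depends on how much memory each leak loses: a small leak lets the process limp along for a while longer, a large one kills it abruptly;

Once the process dies, it cannot serve requests until it is back, and health checks, name services and load balancers need some time to notice. Under heavy traffic, that window of unavailability can still have a considerable impact.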

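The quality-of-service requirement does not change, so whatever operations practices you use, a problem is still a problem and has to be fixed.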

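Go memory leaks

Garbage collection

Automatic memory management reduces the complexity of managing memory: unlike C/C++ developers, you do not need explicit malloc/free or new/delete. Garbage collection reclaims unused memory using a GC algorithm, and there are many of them, such as reference counting, mark-sweep, copying and generational collection.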

Go's garbage collector uses a concurrent tri-color mark-sweep algorithm; see:

Garbage Collection In Go: Part I - Semantics

Garbage Collection In Go: Part II - GC Traces

Garbage Collection In Go: Part III - GC Pacing

Go supports automatic memory management, so can memory leaks still happen?

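In theory, the garbage collection (GC) algorithm cleans up heap memory effectively, and there is no reason to doubt that. But GC only works if the program drops its references to the memory. Only then does the collector consider the memory reclaimable and free it.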
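To see that the collector only frees what is no longer referenced, the sketch below keeps a 64 MiB buffer in a package-level variable, forces a collection with runtime.GC, and compares runtime.MemStats.HeapAlloc before and after the reference is dropped. It is an illustration added here, not part of the original article, and the helper name liveHeap is made up.

```go
package main

import (
	"fmt"
	"runtime"
)

// cache is package-level, so whatever it points to stays reachable, and
// therefore uncollectable, until the reference is dropped.
var cache []byte

// liveHeap forces a full collection and returns the bytes of heap objects
// that survived it.
func liveHeap() uint64 {
	runtime.GC()
	var m runtime.MemStats
	runtime.ReadMemStats(&m)
	return m.HeapAlloc
}

func main() {
	cache = make([]byte, 64<<20) // 64 MiB, still referenced
	held := liveHeap()

	cache = nil // drop the only reference
	released := liveHeap()

	fmt.Printf("referenced: %d MiB live, released: %d MiB live\n", held>>20, released>>20)
}
```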

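Memory leak scenarios

In reality, some coding patterns do cause "temporary" or "permanent" memory leaks. Avoiding them requires developers to understand the language's design and implementation, and the compiler's behavior, more deeply; see: Go memory leaking scenarios.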

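Even a temporary leak can get a process OOM-killed, given finite memory and a particular combination of allocation size, allocation frequency and release frequency. So developers should treat every line of code with respect and handle memory carefully.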

Go 101 discusses common memory leak scenarios and summarizes them as follows:

Kind of memory leaking caused by substrings

Kind of memory leaking caused by subslices

Kind of memory leaking caused by not resetting pointers in lost slice elements

Real memory leaking caused by hanging goroutines

Real memory leaking caused by using finalizers improperly

Kind of resource leaking by deferring function calls
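For the first three cases, the usual remedy is to copy what you need instead of keeping a view into a larger buffer, and to clear pointers before slicing them out of reach. The sketch below assumes Go 1.20+ for strings.Clone. The helper names are made up for illustration.

```go
package main

import (
	"fmt"
	"strings"
)

// prefixOf returns a copy of the first n bytes of s. A plain s[:n] would
// share s's backing array and keep all of it alive; strings.Clone copies
// the bytes so the large original can be collected.
func prefixOf(s string, n int) string {
	if n > len(s) {
		n = len(s)
	}
	return strings.Clone(s[:n])
}

// headOf returns a copy of the first k elements of b instead of b[:k],
// for the same reason: the subslice would pin b's whole backing array.
func headOf(b []byte, k int) []byte {
	if k > len(b) {
		k = len(b)
	}
	out := make([]byte, k)
	copy(out, b[:k])
	return out
}

type item struct{ payload []byte }

// popFront removes the first element. Clearing the slot before reslicing
// matters: the backing array still holds it, and a stale pointer there
// would keep the item (and its payload) reachable.
func popFront(q []*item) (*item, []*item) {
	first := q[0]
	q[0] = nil
	return first, q[1:]
}

func main() {
	big := strings.Repeat("x", 1<<20)
	fmt.Println(len(prefixOf(big, 16)))

	h := headOf(make([]byte, 1<<20), 8)
	fmt.Println(len(h), cap(h))

	q := []*item{{payload: make([]byte, 1024)}, {payload: make([]byte, 1024)}}
	_, q = popFront(q)
	fmt.Println(len(q))
}
```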

In short, these fall into "temporary" and "permanent" leaks:

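A temporary leak means memory that should be released is not released promptly, although it may still be released later. Even so, it is a problem when memory is tight. Such leaks are mainly caused by wrongly sharing the underlying buffer of a string or slice, which keeps unneeded data alive, or by defer delaying the release of resources.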

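A permanent leak means the leaked memory can never be reclaimed for the rest of the process's lifetime. A typical example is a goroutine that cannot exit because of an unexpected for-loop or a blocking channel select-case, so the goroutine's stack and the memory it references leak permanently.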

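Troubleshooting memory leaks

You may first suspect a leak because the process was OOM-killed, or because top shows its memory usage growing steadily instead of settling at a reasonable level. However you found it, once the problem is confirmed you need to pick a suitable method, locate the root cause, and fix it promptly.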
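The "memory keeps growing" signal can also be sampled from inside the process or by a small sidecar. On Linux, the resident set size that top reports as RES is also exposed as VmRSS in /proc/<pid>/status. The parser below is a sketch. The function name is made up, and it assumes the usual "VmRSS: <n> kB" line format.

```go
package main

import (
	"bufio"
	"fmt"
	"os"
	"strconv"
	"strings"
)

// vmRSSKB extracts the VmRSS value (resident set size, in kB) from the
// text of /proc/<pid>/status.
func vmRSSKB(status string) (int64, bool) {
	sc := bufio.NewScanner(strings.NewReader(status))
	for sc.Scan() {
		fields := strings.Fields(sc.Text())
		// Expected shape: "VmRSS:    12345 kB"
		if len(fields) >= 2 && fields[0] == "VmRSS:" {
			n, err := strconv.ParseInt(fields[1], 10, 64)
			return n, err == nil
		}
	}
	return 0, false
}

func main() {
	// Linux only. Sampling this periodically and comparing the values shows
	// whether RSS keeps climbing instead of levelling off.
	data, err := os.ReadFile("/proc/self/status")
	if err != nil {
		fmt.Println("cannot read /proc/self/status:", err)
		return
	}
	if kb, ok := vmRSSKB(string(data)); ok {
		fmt.Printf("VmRSS: %d kB\n", kb)
	}
}
```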

Troubleshooting with pprof

pprof profile types

Go provides the pprof tool for sampling and analyzing running Go programs. It supports several profile types:

goroutine - stack traces of all current goroutines

heap - a sampling of all heap allocations

threadcreate - stack traces that led to the creation of new OS threads

block - stack traces that led to blocking on synchronization primitives

mutex - stack traces of holders of contended mutexes

profile - CPU profile

trace - a trace of execution of the current program

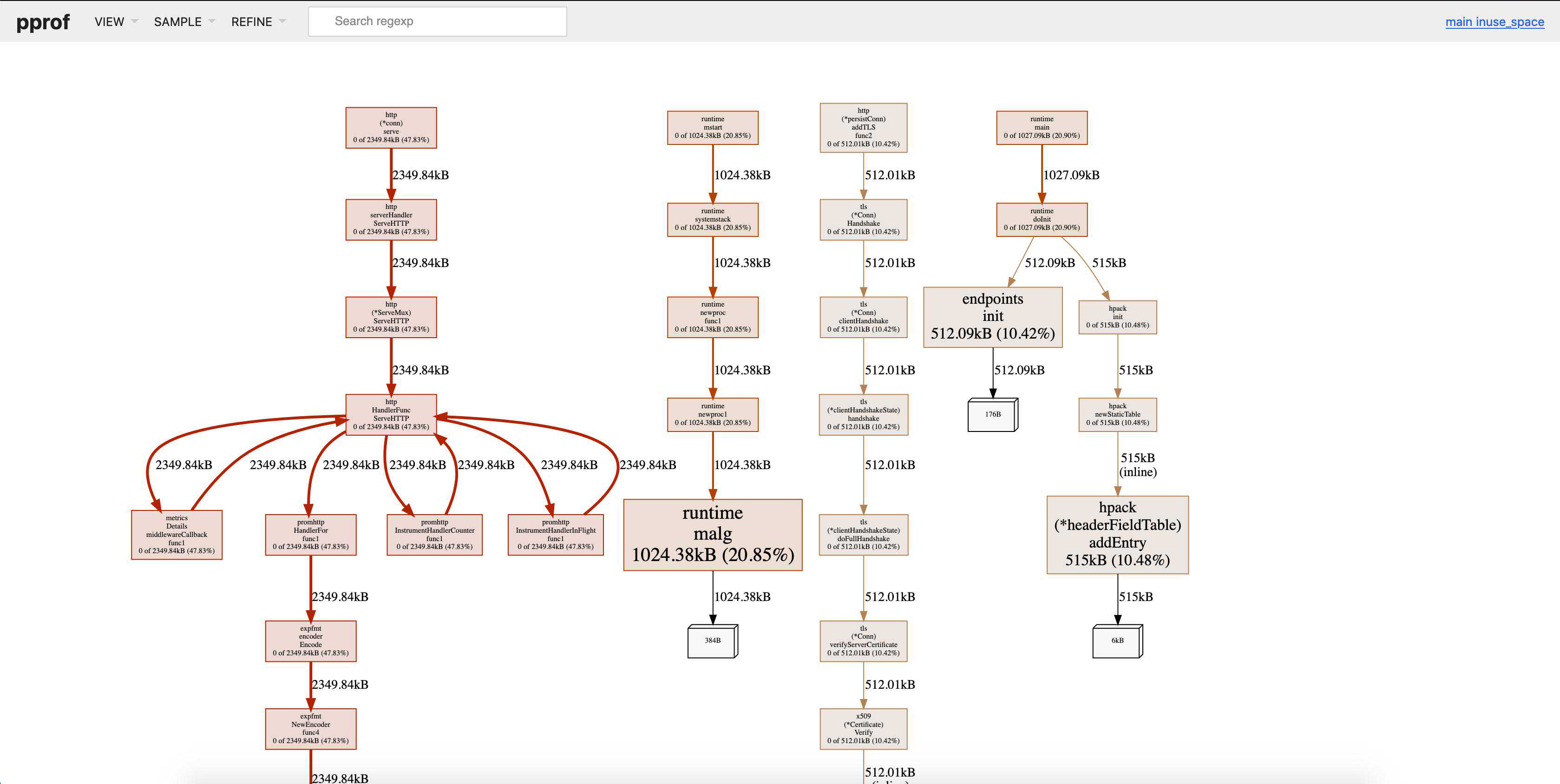

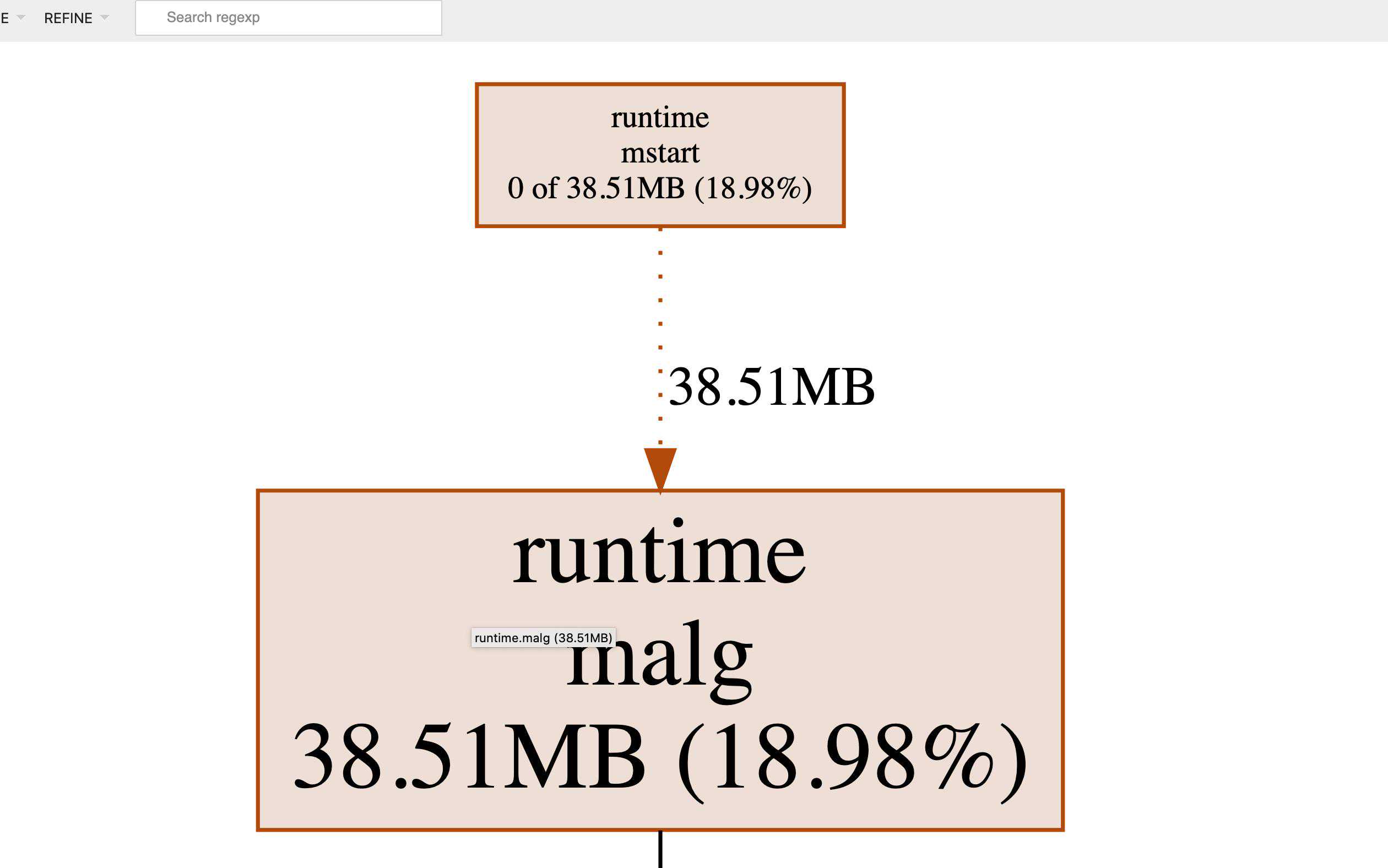

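Using pprof

Many RPC frameworks now include a built-in admin module that lets you sample and analyze a service through /debug/pprof on its admin port. pprof adds some performance overhead, so it is best to lower the instance's load-balancing weight before profiling.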

Integrating pprof is simple. Just add the following code to the project:

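    import (
        "log"
        "net/http"
        _ "net/http/pprof" // registers the /debug/pprof handlers
    )

    // somewhere during startup, e.g. in main:
    go func() {
        log.Println(http.ListenAndServe("localhost:6060", nil))
    }()

Then run go tool pprof to collect a sample: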

    go tool pprof -seconds=10 -http=:9999 http://localhost:6060/debug/pprof/heap

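Sometimes network isolation prevents you from reaching test or production machines directly from your development machine, or those machines do not have Go installed. In that case you can do this instead: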

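Save the profile with curl, copy it to a machine that has Go installed, and analyze it there with go tool pprof:

    curl -o heap.out "http://localhost:6060/debug/pprof/heap?seconds=30"

A minimal BCC program attaches a kprobe to sys_open and prints a message each time the probe fires:

    from bcc import BPF

    program = """
    #include <linux/...>  // for mode_t
    int kprobe__sys_open(struct pt_regs *ctx, char __user *pathname, int flags, mode_t mode) {
        bpf_trace_printk("sys_open called.\\n");
        return 0;
    }
    """
    b = BPF(text=program)
    b.trace_print()

When you run it, a trace line containing "sys_open called." is printed each time a process calls sys_open.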


OK. The BCC suite provides a tool called memleak for analyzing memory leaks. Let's walk through a cgo memory leak example to see how to use it.

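I recommend spending some time on Linux tracing systems (see "Linux tracing systems & how they fit together") to understand what kprobes, uprobes, DTrace probes and kernel tracepoints are and how they work. Only then can you appreciate how powerful eBPF is. I won't go into that here; let's look at an example.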

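BCC: memory leak example

First, let's look at how a cgo example project is organized. The example project is taken from ..., and you can download it directly from there.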

    c-so/
    ├── Makefile
    ├── add
    │   ├── Makefile
    │   ├── ...
    │   └── src
    │       ├── ...
    │       └── ...
    └── ...

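In the project above, the C files under add/src/ implement an add function. The Go file in add/ defines an exported function Add(a, b int) int, which calls int add(int, int), defined under src, through cgo. add/Makefile compiles all the source files under add into a shared library for c-so/ to use.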

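The main program in c-so/ calls the Add function from package add, defined in the add directory, and c-so/Makefile simply runs go build. After the build, running ./c-so reports that the library file does not exist. This is a library search-path problem. Run LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$(pwd -P) ./c-so instead, and the program runs normally.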

OK. Now let's tamper with the C source under src/ a little, changing it as follows. The change inserts code that keeps allocating memory:

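    #include <stdlib.h>  /* malloc, free */
    #include <unistd.h>  /* sleep */

    int add(int a, int b) {
        /******* insert memory leakage start ********/
        int i = 0;
        int max = 0x7fffffff;
        for (; i < max; i++) {
            int *p = (int *)malloc(sizeof(int) * 8);
            sleep(1);
            if (i % 2 == 0) {
                free(p);  /* only every other block is freed */
            }
        }
        /******* insert memory leakage end ********/
        return a + b;
    }

Now rebuild with make and run the program again. It keeps calling malloc but frees only every other block, so memory leaks bit by bit. Let's see how memleak helps locate the leak: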

    $ /usr/share/bcc/tools/memleak -p $(pidof c-so)

After it runs for a while, memleak reports the allocations, showing where the "top 10 allocations that have not been freed yet" came from:

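From the last entry of the memleak report:

while running, the c-so program called the add function in the shared library;

the add function performed 345+ allocations of sizeof(int)*8 bytes each, 11048 bytes in total;

the malloc call is roughly at the instruction at offset +0x28 from the start of add, which can be confirmed by disassembling add.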

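We can now see where memory is allocated, how often and how much. However, the report does not show how much memory actually leaked: the code also calls free, so why isn't that reflected? Run memleak -h to see which options are available: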

    $ /usr/share/bcc/tools/memleak -p $(pidof c-so) -t

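The report now contains alloc entered/exited and free entered/exited, so memleak clearly traces frees as well. But the report is still not very intuitive. Can it show the leaked memory directly? It can, with a small modification. Let's look at the implementation below; you will see that the existing report is already enough for the analysis.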

bcc/memleak implementation

Without reading the source I never feel quite sure, so let's look at part of the logic in memleak's eBPF program:

Tracing malloc:

    int malloc_enter(struct pt_regs *ctx, size_t size)
      - static inline int gen_alloc_enter(struct pt_regs *ctx, size_t size):
          records the size requested by the in-flight call (in sizes)
    int malloc_exit(struct pt_regs *ctx)
      - static inline int gen_alloc_exit(struct pt_regs *ctx)
        - static inline int gen_alloc_exit2(struct pt_regs *ctx, u64 address):
            records the address of the newly allocated memory (in allocs)
          - stack_traces.get_stackid(ctx, STACK_FLAGS):
              records the call stack of this allocation (stored in allocs)

Tracing free:

    int free_enter(struct pt_regs *ctx, void *address)
      - static inline int gen_free_enter(struct pt_regs *ctx, void *address):
          removes the freed address from allocs

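memleak periodically sorts the entries in allocs and prints them in descending order of allocated size. Because memleak traces both malloc and free, after a while the call stacks it keeps printing can be regarded as the allocation sites whose memory was never freed, i.e. leaked.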
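The bookkeeping described above can be modeled in a few lines of Go. This is a toy model for illustration, not memleak's real code. Allocations are recorded by address together with a stack label, frees delete the address, and whatever remains is grouped by stack and sorted by size. The stack label "add+0x28" is only a stand-in for memleak's stack ids.

```go
package main

import (
	"fmt"
	"sort"
)

type allocInfo struct {
	size  uint64
	stack string // memleak stores a stack id; a string stands in for it
}

// tracker keeps outstanding allocations keyed by address, like allocs.
type tracker struct {
	allocs map[uint64]allocInfo
}

func newTracker() *tracker { return &tracker{allocs: make(map[uint64]allocInfo)} }

// onAlloc corresponds to malloc returning addr for a request of size bytes.
func (t *tracker) onAlloc(addr, size uint64, stack string) {
	t.allocs[addr] = allocInfo{size: size, stack: stack}
}

// onFree corresponds to free(addr); unknown addresses are ignored.
func (t *tracker) onFree(addr uint64) { delete(t.allocs, addr) }

type stackTotal struct {
	stack        string
	bytes, count uint64
}

// top groups outstanding allocations by stack and returns the n largest.
func (t *tracker) top(n int) []stackTotal {
	byStack := make(map[string]*stackTotal)
	for _, a := range t.allocs {
		s, ok := byStack[a.stack]
		if !ok {
			s = &stackTotal{stack: a.stack}
			byStack[a.stack] = s
		}
		s.bytes += a.size
		s.count++
	}
	out := make([]stackTotal, 0, len(byStack))
	for _, s := range byStack {
		out = append(out, *s)
	}
	sort.Slice(out, func(i, j int) bool {
		if out[i].bytes != out[j].bytes {
			return out[i].bytes > out[j].bytes
		}
		return out[i].stack < out[j].stack
	})
	if len(out) > n {
		out = out[:n]
	}
	return out
}

func main() {
	t := newTracker()
	// Mimic the modified add(): 32-byte mallocs, every even-numbered one freed.
	for i := uint64(0); i < 10; i++ {
		addr := 0x1000 + i*0x20
		t.onAlloc(addr, 32, "add+0x28")
		if i%2 == 0 {
			t.onFree(addr)
		}
	}
	for _, s := range t.top(10) {
		fmt.Printf("%d bytes in %d allocations from stack %s\n", s.bytes, s.count, s.stack)
	}
}
```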

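Troubleshooting with pmap/gdb

This is another fairly general approach. Depending on the circumstances, for example when the environment does not allow installing Go, BCC or similar analysis tools, you may even want to start with pmap. In short, choose whatever method fits.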

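Memory and pmap basics

Memory regions in a process can be classified along the dimensions below. If you are not familiar with them, the following articles are recommended; see: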

Memory Types

Understanding Process Memory

Managing Memory

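|             | Private                                            | Shared            |
|-------------|----------------------------------------------------|-------------------|
| Anonymous   | stack, malloc, mmap(anon+private), brk/sbrk        | mmap(anon+shared) |
| File-backed | mmap(fd,private), binary/shared libraries          | mmap(fd,shared)   |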

pmap shows the layout of a process's address space, including address ranges, sizes and mappings, for example:

    $ pmap -p pid
    $ cat /proc/pid/smaps
    Address           Kbytes     RSS   Dirty Mode  Mapping
    0000000000400000       4       4       4 r-x-- blah
    0000000000401000       4       4       4 rw--- blah
    00007fc3b50df000   51200   51200   51200 rw-s- zero (deleted)
    00007                                          [anon]
    00007                                          [anon]
    00007fc3b8886000       8       8       8 rw--- [anon]
    00007                                          [anon]
    00007fff7e6ef000     132      12      12 rw--- [stack]
    00007fff7e773000       4       4       0 r-x-- [anon]
    ffffffffff600000       4       0       0 r-x-- [anon]
    ----------------  ------  ------  ------
    total kB            5584

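These commands differ only in how detailed their output is. Once we understand the types of process memory and how to use pmap, we can start analyzing a program that leaks memory.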

Troubleshooting example: preparing a test case

Suppose we write a test program with the following directory structure:

    leaks
    |-- conf
    |   `-- ...
    |-- ...
    |-- leaks
    |-- ...
    `-- task
        `-- ...

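The main file starts the two pieces of logic under conf and task. The conf code starts a loop that allocates 1 KB on each iteration and fills it entirely with the character C. The task code starts a loop that allocates 1 KB on each iteration and fills it with the character T. The conf loop waits 1s between iterations and the task loop 2s.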

    package main

    import (
        "leaks/conf"
        "leaks/task"
    )

    func main() {
        conf.LoadConfig("aaa")
        task.NewTask("bbb")
        select {} // block forever
    }

File conf/...:

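    package conf

    import (
        "time"
    )

    type Config struct {
        A string
        B string
        C string
    }

    func LoadConfig(fp string) (*Config, error) {
        kb := 1 << 10
        go func() {
            for {
                // allocate 1 KB and fill it with 'C'
                p := make([]byte, kb, kb)
                for i := 0; i < kb; i++ {
                    p[i] = 'C'
                }
                time.Sleep(time.Second * 1)
                println("conf")
            }
        }()
        return &Config{}, nil
    }

File task/...: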

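    package task

    import (
        "time"
    )

    type Task struct {
        A string
        B string
        C string
    }

    func NewTask(name string) (*Task, error) {
        kb := 1 << 10
        // start async process
        go func() {
            for {
                // allocate 1 KB and fill it with 'T'
                p := make([]byte, kb, kb)
                for i := 0; i < kb; i++ {
                    p[i] = 'T'
                }
                time.Sleep(time.Second * 2)
                println("task")
            }
        }()
        return &Task{}, nil
    }

Then build with go build to produce the executable leaks. You may have noticed that there is nothing special about this code: the garbage collector will reclaim this memory, and the only question is how quickly.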

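Right. To make the pmap technique easier to explain, let's pretend this memory is leaked. How? We turn the garbage collector off by running the program as GOGC=off ./leaks.

You can verify with top -p $(pidof leaks) that RSS shoots up.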

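Troubleshooting example: searching for suspicious memory regions

For example, you may notice an anon memory region whose usage keeps growing, or a growing number of such regions. Comparing two pmap outputs taken at different times reveals this: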

    $ pmap -x $(pidof leaks) > 1.txt
    $ pmap -x $(pidof leaks) > 2.txt

Comparing the two snapshots (where rows differ, 1.txt is on the left of the | and 2.txt on the right):

    86754:   ./leaks/leaks
    Address           Kbytes     RSS   Dirty Mode  Mapping
    0000000000720                            r-x--  leaks
    000000000045d000     496     476       0 r----  leaks
    00000000004d9000      16      16      16 rw---  leaks
    00000000004dd000     176      36      36 rw---  [anon]
    000000c000000000   98508                 rw---  [anon]  | 000000c000000000   2104652               rw---  [anon]
    00007f26010ad000   39816    3236    3236 rw---  [anon]  | 00007f26010ad000     39816    3432  3432 rw---  [anon]
    00007f260378f000  263680       0       0 -----  [anon]
    00007f261390f000       4       4       4 rw---  [anon]
    00007f2656400                            -----  [anon]
    00007f26257bf000       4       4       4 rw---  [anon]
    00007f26257c0000   36692       0       0 -----  [anon]
    00007f2627b95000       4       4       4 rw---  [anon]
    00007f2627b96000    4580       0       0 -----  [anon]
    00007f262800f000       4       4       4 rw---  [anon]
    00007f2628010000     508       0       0 -----  [anon]
    00007f262808f000     384      44      44 rw---  [anon]
    00007ffcdd81c000     132      12      12 rw---  [stack]
    00007ffcdd86d000      12       0       0 r----  [anon]
    00007ffcdd870000       8       4       0 r-x--  [anon]
    ffffffffff600000       4       0       0 r-x--  [anon]
    ----------------  ------  ------  ------
    total kB         7701868                        | total kB          7708208

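Notice that the RSS of the regions starting at 000000c000000000 and 00007f26010ad000 has grown, meaning their physical memory usage increased. Since the program is known to leak, such regions are worth analyzing as suspects. Large contiguous memory regions are also suspects, and so is an unusually large number of such regions.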

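After finding a suspicious region, try dumping its contents and analyzing them with tools such as strings or hexdump. This usually prints some string data, which often reminds us which data structures and code logic the data belongs to.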

First attach to the target process with gdb -p $(pidof leaks), then run the following two commands to dump the suspicious regions:

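    (gdb) dump memory <file1> 0x000000c000000000 0x000000c000000000+131072*1024
    (gdb) dump memory <file2> 0x00007f26010ad000 0x00007f26010ad000+39816*1024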

Then try strings or hexdump:

    [0;34m\]\W\[$(git_color)\]$(git_branch)\[\e[0;37m\]$\[\e[0m\]
    SXPFDEXPF
    e[0;34m\]\W\[$(git_color)\]$(git_branch)\[\e[0;37m\]$\[\e[0m\]
    SXPFDEXPF
    TTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTT...
    ...
    CCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCC
    TTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTT
    CCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCCC
    TTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTTT
    ...

or

    ...
    0008e050  00 00 00 00 00 00 00 00  00 00 00 00 00 00 00 00  |................|
    *
    00090000  00 e0 08 00 c0 00 00 00  00 00 00 00 00 00 00 00  |................|
    00090010  00 00 00 00 00 00 00 00  00 00 00 00 00 00 00 00  |................|
    *
    00100000  54 54 54 54 54 54 54 54  54 54 54 54 54 54 54 54  |TTTTTTTTTTTTTTTT|
    *
    00200000  43 43 43 43 43 43 43 43  43 43 43 43 43 43 43 43  |CCCCCCCCCCCCCCCC|
    *
    ...       54 54 54 54 54 54 54 54  54 54 54 54 54 54 54 54  |TTTTTTTTTTTTTTTT|
    *
    00500000  43 43 43 43 43 43 43 43  43 43 43 43 43 43 43 43  |CCCCCCCCCCCCCCCC|
    *
    00700000  54 54 54 54 54 54 54 54  54 54 54 54 54 54 54 54  |TTTTTTTTTTTTTTTT|
    *
    ...       43 43 43 43 43 43 43 43  43 43 43 43 43 43 43 43  |CCCCCCCCCCCCCCCC|
    *
    00a00000  54 54 54 54 54 54 54 54  54 54 54 54 54 54 54 54  |TTTTTTTTTTTTTTTT|
    *
    00b00000  43 43 43 43 43 43 43 43  43 43 43 43 43 43 43 43  |CCCCCCCCCCCCCCCC|
    *
    00d00000  54 54 54 54 54 54 54 54  54 54 54 54 54 54 54 54  |TTTTTTTTTTTTTTTT|
    *
    00e00000  43 43 43 43 43 43 43 43  43 43 43 43 43 43 43 43  |CCCCCCCCCCCCCCCC|
    *
    01000000  54 54 54 54 54 54 54 54  54 54 54 54 54 54 54 54  |TTTTTTTTTTTTTTTT|
    *

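Now suppose that strings in this output such as CCCCCCC or TTTTTTTTT carried more meaningful information. They could help us link the data to data structures and code logic in the program. For example, the C strings point to config loading and the T strings to the task logic. Digging into that code usually leads to the problem.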
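When a dump is large, it can help to measure which repeated patterns dominate it before reading it by eye. The sketch below uses a hypothetical helper, not a standard tool. It counts, per byte value, how many bytes sit in long runs of that byte. Zero-filled areas also show up as runs of 0x00.

```go
package main

import (
	"fmt"
	"os"
	"sort"
)

// longRuns counts, per byte value, how many bytes of data sit in runs of
// that byte at least minLen long. In a dump like the one above, a large
// count for 'C' or 'T' hints at the code that fills buffers with that byte.
func longRuns(data []byte, minLen int) map[byte]int {
	counts := make(map[byte]int)
	for i := 0; i < len(data); {
		j := i
		for j < len(data) && data[j] == data[i] {
			j++
		}
		if j-i >= minLen {
			counts[data[i]] += j - i
		}
		i = j
	}
	return counts
}

func main() {
	if len(os.Args) != 2 {
		fmt.Println("usage: runs <dump-file>")
		return
	}
	data, err := os.ReadFile(os.Args[1])
	if err != nil {
		fmt.Println(err)
		return
	}
	counts := longRuns(data, 64)
	var keys []int
	for b := range counts {
		keys = append(keys, int(b))
	}
	sort.Ints(keys)
	for _, k := range keys {
		fmt.Printf("%q (0x%02x): %d bytes\n", byte(k), k, counts[byte(k)])
	}
}
```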

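You can also dump the whole process with gcore, which works on the same principle. gcore detaches from the process as soon as the dump is written, so it is faster than dumping by hand and affects the traced process for a shorter time. The dump file is usually large, though (remember to set ulimit -c). When analyzing the core file with hexdump, you can skip bytes selectively to examine only the suspicious regions you care about.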

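Other approaches

There are many other ways and tools for troubleshooting memory leaks, such as the well-known valgrind. In my tests, however, valgrind did not pinpoint the leak as precisely as bcc did, possibly because I was using it wrong. See "Debugging cgo memory leaks" if you are interested; I won't go further here.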

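Summary

This article introduced methods for locating and analyzing memory leaks. Although it is written for Go developers, the methods are not limited to Go; in particular, ebpf-memleak should be widely applicable. eBPF has strict Linux kernel version requirements, which you need to keep in mind when using it. Its strength is that it provides a powerful foundation for observation and measurement, which is why bcc offers so many analysis tools. It is a rare and valuable tool.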

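This article is also my first real attempt at eBPF, and I hope mastering it will help in my future work. Developers have plenty of tools at hand, but few are truly convenient and hassle-free. If bcc can pinpoint the location with a single command, I would rather not hunt for leaks with pmap, gdb gcore, gdb dump and strings plus hexdump. Of course, when circumstances rule bcc out, for example on a kernel that does not support it, choose whatever method works.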

References

Memory leaking

Golang memory leaks

Finding memory leak in cgo

Dive into BPF

Introduction to XDP and eBPF

Debugging cgo memory leaks

Choosing a Linux tracer

Taming tracepoints in the Linux kernel

Linux tracing systems & how they fit together